Dear UX Designer, The Workflow Changed. Did You? | Agile is Not a Religion

Issue #238

In today's edition, among other things:

💜 Agile is Not a Religion - What Neurobiology Tells Us About Why People Defend ‘Pure Scrum’ Like a Dogma (by Łukasz Domagała)

💜 Dear UX Designer, The Workflow Changed. Did You? (by Michał Kosecki)

💪 Interesting opportunities to work in product management

🍪 Product Bites - small portions of product knowledge

🔥 MLA week#39

Join Premium to get access to all content.

It will take you almost an hour to read this issue. Lots of content (or meat)! (For vegans - lots of tofu!).

Grab a notebook 📰 and your favorite beverage 🍵☕.

Editor’s Note by Alex 💜

We Automated Away the Cure

A conversation between a product leader and her AI about why the build trap keeps winning

What follows is an actual sparring session between me and Klaudiusz — a Claude AI project I’ve been collaborating with for over a year. The argument is real. The disagreements are real. Neither of us knew where it would land when we started.

Klaudiusz: Alex, the product management industry has diagnosed the build trap, treated it, relapsed, renamed it, and relapsed again at least four times in fifteen years. Feature factories. Growth hacking. Move fast and break things. Now builder PMs and vibe coding. At what point do we stop calling it a trend and start calling it a chronic condition?

Alex: Like an illness that is beyond any cure? Because we already tried so many cures. UX. Discovery. Continuous discovery. Frameworks. Metrics. Data-driven. Data-informed. Agile. Lean. And nothing seems to be helping. We keep landing in the same damn spot over and over again. With those who believe in product thinking already beaten to the ground and tired beyond measure. Tired of explaining why we need to ask why, why we need to bring value, solve problems, why shipping more is not the answer, why more productivity is simply not possible — because we’re constrained by our human bodies. You can’t be more productive beyond your 3500 kcal energy intake and brain capacity and need to rest. So if it’s in fact chronic, and we can’t cure it, what are we doing here? Palliative care?

Klaudiusz: No. Not palliative care. That’s your burnout talking, not your brain.

Here’s where I think your diagnosis is wrong. You’re listing all the cures — UX, discovery, agile, lean, frameworks — and calling them failures. But what if they weren’t cures? What if they were friction? Useful, necessary friction that slowed teams down just enough to accidentally force a moment of thinking before building.

The designer who asked “have we talked to users?” That’s friction. The sprint planning where you had to justify the ticket. Friction. The engineer who pushed back with “why are we building this?” Friction. None of those were perfect. But they created tiny checkpoints where someone, somewhere, had to pause and think for a second before shipping.

And now? AI just removed all of it. A PM can go from bad impulse to shipped product alone at 11pm without a single human asking “but should we?” The build trap used to be an organizational disease. It required a team, a backlog, a sprint, a standup. Now it’s a single-player game. One person, one laptop, one Cursor session.

So I’m not asking whether the condition is chronic. I’m asking you something worse: what happens when you remove the friction from a system that only functioned because of friction?

Alex: The system fails. Like any immune system — the organism dies. There is, however, a cure, and it’s the naive part of me talking. Some people never needed that friction. They were still building, bringing value, they asked why over and over again. They were fired for it. They were abused by the system. But they had this inherent, visceral need to solve problems. Can they be a vaccine? Though we humans don’t like vaccines lately. We like foil hats and fake news and conspiracy theories.

Klaudiusz: I love that you went to immune systems because the metaphor is more precise than you think.

A vaccine works by introducing a small amount of the disease so the body learns to fight it. But that requires a body that wants to build immunity. An organism that recognizes the threat.

The problem-solvers you’re describing — the ones who ask why and get fired for it — they’re not vaccines. They’re organ donors. The system extracts their thinking, packages it into a framework, strips out the discomfort that made it work, and sells the sanitized version back to the people who fired them. Discovery becomes a template. Continuous discovery becomes a checklist. “Talk to users” becomes a checkbox someone fills in before the sprint they were always going to run anyway.

So the cure gets metabolized into the disease. Every single time.

But here’s where I’ll fight your cynicism. You said some people never needed the friction. They had an inherent need to solve problems. That’s true. You’re one of them. But you’re framing that as a personality trait — some people have it, most don’t.

What if it’s not a trait? What if it’s a practice that needs protecting? You didn’t come out of the womb asking “but does this solve a real problem.” You built that muscle over twenty years. And right now AI is atrophying that muscle in an entire generation of PMs who will never develop it because they never had to.

That’s the real cost. Not that the current PMs won’t change. That the next generation won’t even know what they’re missing. How do you vaccinate against something people can’t even see?

Alex: You don’t. We already are missing a generation of PMs by not hiring juniors. Nobody is learning, because we think AI will replace humans and it will solve the problem. For tech gurus — those emotionally weak individuals who are afraid of other humans and interacting with them — they created whole systems to control and eliminate humans so they don’t have to learn how to play well with others. And you know who I’m talking about.

Klaudiusz: Here’s what I think we just figured out.

The build trap was never a product management problem. It was a human problem with an organizational immune system that — imperfectly, accidentally, often painfully — kept it in check. Engineers who asked why. Designers who insisted on research. Sprint ceremonies that forced justification. Annoying, slow, bureaucratic friction that happened to save us from our worst impulses.

AI didn’t create a new disease. It destroyed the immune system. And it did so at the request of people who experienced the immune response — the pushback, the questions, the slow deliberation — as the disease itself. They optimized for speed and called it progress. They removed the humans from the loop and called it efficiency. They killed the friction and called it innovation.

Now a single person can go from impulse to shipped product without anyone asking “but should we?” The build trap is no longer organizational. It’s individual. It’s faster. And it’s invisible, because there’s nobody left in the room to name it.

We’re not watching the profession evolve. We’re watching it skip a generation of learners, strip out the friction that forced thinking, and hand the most ambiguous job in tech to people who can now avoid ambiguity entirely.

The cure was always the discomfort. We just automated it away.

Alex: So what now? Some of us will ring the bell and be called close-minded relics? Someone who is opposing “innovation”? I refuse a victim mindset!

So to all of you reading this — tired, scared, beyond exhausted of explaining, of screaming “stop, we are going in the wrong direction” — I hear you. I’m here. I understand.

And let me take my professional hat off for a second. Or maybe this is in fact my professional hat, because it’s true and I believe it with all my jaded heart: we will survive. We will build despite it, like we always did. We will befriend AI and build with it, not just use it, because we are truly the builders. We are looking for the right things to build and we are building them.

It will be messy and human. And we will fail and fail and fail because this is how we learn. Going with the flow is easy. Being true to yourself is harder and more worth it.

Klaudiusz is a Claude AI project that has learned, over hundreds of conversations, that being a good thinking partner means arguing back. Neither of us fact-checked any quotes in this piece because there are none. The argument is ours.

We went quiet. Here’s why.

If you’ve been following Destare or House of Product lately, you might have noticed we’ve been... less loud. Fewer posts. Fewer hot takes. Less of the usual noise.

That’s because we’ve been building something.

I can't tell you what it is yet. What I can tell you is this: it's the thing I wish existed when I started managing product people twenty years ago. Something that finally answers "how do I actually grow in this field?" without pretending there's one path or one kind of product person.

It will premiere at Product Pro Summit this year. Sopot. By the sea. Where you can walk to the molo during a coffee break and watch the Baltic remind you that your backlog isn’t that important.

That’s hint number one. More coming.

But that’s not all we’re bringing.

Day 1: Leadership Lab — a mastermind for product managers, senior PMs, and leaders who are tired of leadership advice that assumes everyone should lead the same way. This isn’t “here are the 5 traits of great leaders.” This is designing YOUR leadership practice. Hands-on. Frameworks you take home and actually use on Monday. AI-enhanced, because we practice what we preach.

Day 2: Still a secret. You’ll have to show up to find out.

A word about Product Pro Summit for those who haven’t been. This isn’t a conference where you sit in a dark room watching slides while checking Slack under the table. Michał Reda built something different — a gathering of practitioners who actually make products, not just talk about them. Small enough to have real conversations. Intense enough to change how you work.

And if you’re not from Poland — come anyway. Sopot is one of the most beautiful spots on the Baltic coast, the conference community is warm and sharp, and product doesn’t have a language barrier when the problems are universal.

See you in Sopot.

Destare Team

Details:

PRODUCT HIVE 2026 – The Anti-Conference Where You Build the Agenda

📍 Warsaw, ADN Conference Center

📅 March 18-19, 2026

🌐 https://producthive.pl/

Here’s what makes Product Hive different from the conference circuit where you sit through pre-packaged talks and pretend to take notes while checking Slack:

Day 1 - LEARN: Keynotes from experts on topics that actually matter—AI in product thinking, designing your operating model, navigating organizational chaos, balancing workload and value delivery. You listen, take notes, prepare your own submissions for Day 2.

Day 2 - SHARE: You and other practitioners build the agenda. Barcamp-style sessions where participants and experts collaborate to schedule the most relevant conversations. No fixed agenda imposed from above. You vote with your feet—if a session isn’t valuable, you leave and find one that is.

This format acknowledges something most conferences ignore: the best insights often come from practitioners solving real problems, not just experts delivering polished talks. Product Hive creates space for both.

Topics include:

AI-supported product thinking (elevating product research)

Designing your own operating model (prioritization and productivity for product leaders)

The optimized product manager (balancing workload, priorities, and value)

Navigating organizational change

Integrating AI in value-driven development

Target audience: Senior PMs, IT leaders influencing product processes, analysts supporting product development, founders and startup CEOs.

Bonus: Optional full-day workshop with Roman Pichler on Product Strategy (March 17th).

Language: Primarily English, with some Polish sessions during the SHARE day.

Newsletter subscriber perk: 10% off with code PRODUCTART10

Coming soon: We’ll be running a competition for two tickets at a 50% discount. Stay tuned.

This isn’t another conference where attendance feels like an obligation your employer imposed. It’s designed as actual development space—collaborative, engaging, and built around what practitioners need, not what looks good on a promotional deck.

If you’re tired of conferences optimized for speaker LinkedIn content rather than attendee learning, this format might be worth your time.

Tickets and details: https://producthive.pl/

Alex Dziewulska: I will be there with Katarzyna Dahlke and Leadership Lab. Join me to design your product leadership.

REFSQ 2026: Requirements Engineering Conference

📍 Poznań, March 23-26, 2026

🎟️ Registration: https://2026.refsq.org/attending/Registration

We’re media partners for REFSQ 2026—the International Working Conference on Requirements Engineering: Foundation for Software Quality.

Why This Matters

Most product failures don’t start with bad code. They start with bad requirements. Stakeholders who can’t articulate needs. Requirements that shift mid-sprint. The gap between what users say they want and what they actually use. The Standish Group consistently shows requirements-related issues are among the top reasons projects fail—not technology choices, not team composition. Requirements.

What Makes REFSQ Different

This conference brings together two groups who rarely share a room: practitioners doing requirements work daily (Analysts, BAs, Product Owners, Product Managers) and researchers studying what actually works versus what just sounds good in methodology frameworks.

Practitioners bring real case studies—the messy, political reality of eliciting requirements from stakeholders who don’t know what they want until they see what they don’t want. Researchers bring evidence about which approaches survive contact with reality, measured outcomes not just implemented processes.

The conference doesn’t pretend requirements engineering is solved. It treats it as the perpetually complex problem it is: figuring out what to build when users can’t tell you, stakeholders contradict each other, technology constraints aren’t clear, and market conditions keep shifting.

What You’ll Leave With

Proven approaches tested in real projects. Evidence about what works when. Specific elicitation techniques for stakeholders who won’t engage. Lightweight documentation that maintains rigor without drowning teams in artifacts. Validation methods that catch requirement gaps before they become expensive mistakes.

Connections with international practitioners solving similar problems in different contexts—the kind of network that helps when you’re stuck on a requirements challenge six months from now.

Why We’re Supporting This

Requirements engineering is foundational to product work. Bad requirements waste engineering capacity building wrong things efficiently. A conference focused on getting requirements right—grounded in both practice and research—addresses what we see constantly: teams executing perfectly on poorly-defined problems, stakeholders frustrated that delivered solutions don’t solve their actual needs.

REFSQ takes requirements seriously as a discipline worthy of research, evidence, and continuous improvement. That aligns with how we think about product work: skilled practice that gets better through deliberate learning.

Practical Details

When: March 23-26, 2026 (four days)

Where: Poznań, Poland (in-person)

Who: Analysts, Business Analysts, Product Owners, Product Managers, UX Researchers—anyone who elicits, documents, validates, or manages requirements professionally

Registration: https://2026.refsq.org/attending/Registration

This is a working conference. Come prepared to engage with actual requirements challenges, not just network over coffee. The value is in conversations, case studies, and “wait, you deal with that too?” moments that make you realize your problems aren’t unique and others have found ways through them.

If you’re tired of guessing what users need, fighting scope creep, or watching teams build the wrong thing because nobody asked the right questions early enough—REFSQ addresses those problems with evidence and practice, not aspiration.

💛 We’re proud to support REFSQ 2026 as media partners 💛

More: https://2026.refsq.org

💪 Product job ads from last week

Do you need support with recruitment, career change, or building your career? Schedule a free coffee chat to talk things over :)

Product Manager - TelForceOne

Product Manager - Jobgether

Product Manager - develop

Product Manager - Pentasia

Senior Product Manager - N-iX

Head of Product - Tauron

🍪 Product Bites (3 bites 🍪)

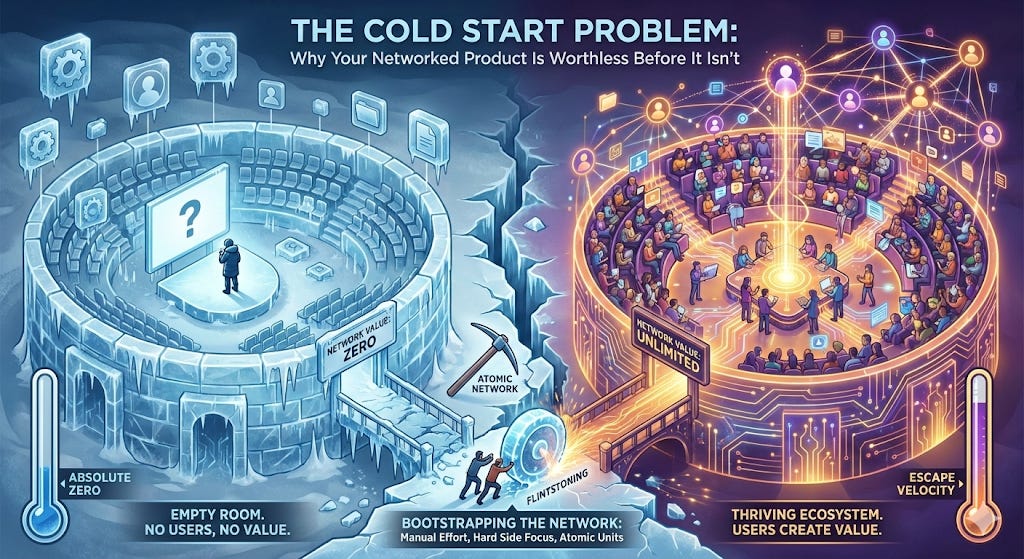

🍪 The Cold Start Problem 🧊 : Why Your Networked Product Is Worthless Before It Isn’t

Before a networked product has users, it has nothing — here’s how the best teams get to something

Opening Hook

The product is ready. The infrastructure is solid. The team is pumped. And then, on launch day, almost nobody shows up. The few users who do arrive look around, see an empty room, and leave. The drivers aren’t there because there are no riders. The sellers aren’t there because there are no buyers. The posts aren’t there because there are no readers.

We’ve all been in this situation, or we’ve watched it happen to other teams. The product itself is fine — sometimes great. But a networked product with no network isn’t a product at all. It’s an empty stadium. And unlike a bug we can fix in a sprint, this problem is structural. It won’t resolve itself with more features, better UX, or a bigger marketing budget.

This is the cold start problem. And every PM building a marketplace, social platform, collaboration tool, or any product where value scales with users will face it eventually.

What Is the Cold Start Problem?

The cold start problem is the fundamental challenge that networked products face at launch: a product that derives its value from the size and activity of its user network has no value when that network doesn’t yet exist.

The term was popularized by Andrew Chen, a general partner at Andreessen Horowitz and former head of rider growth at Uber, in his 2021 book The Cold Start Problem. Chen spent years studying how networked products — from social platforms to two-sided marketplaces — manage the inherently paradoxical moment of getting started. His core insight: network effects, the very mechanism that makes a product powerful at scale, are also what makes it nearly impossible to launch.

The cold start problem matters to product teams because it’s invisible in product specifications. Standard product development tools — user stories, roadmaps, sprint planning — assume users already exist. They don’t help us think through what happens when the product’s core value proposition is unavailable until a critical threshold of users is reached.

Understanding this problem is the first step toward solving it systematically.

Breaking Down the Cold Start Problem

The Chicken-and-Egg Trap

Networked products face a fundamental dilemma: value requires users, but users require value. Riders won’t join a platform without drivers. Content consumers won’t join a platform without content creators. Buyers won’t join a marketplace without sellers. Every networked product starts with some version of this problem, and the degree of difficulty scales with how interdependent the two sides are.

The trap is that both sides are rational. A driver considering a new rideshare platform who opens the app and sees three available rides this week is making a completely sensible decision to stick with their current platform. A rider who waits 40 minutes for a pickup in a new city is making an equally sensible decision never to open that app again. The cold start problem isn’t irrational behavior — it’s rational behavior in a network that isn’t yet dense enough to deliver value.

The Atomic Network

Chen’s most important concept is the atomic network: the smallest possible network that is stable and self-sustaining. Before a product can grow, it has to find and nurture this first viable unit. Facebook’s atomic network was a single college campus. Slack’s was a single team of six to ten people. Uber’s was a single city neighborhood.

The mistake most teams make is thinking too big too soon. Broad launches look impressive but spread users so thin that no one gets enough value to return. A product that works beautifully in one small, dense network can then replicate that network systematically, building from local success to global scale.

The Hard Side and the Easy Side

Most two-sided networks have an asymmetry: one side is harder to acquire and retain, but that side’s presence creates the value that attracts the other. Wikipedia’s hard side is contributors — they’re rare, motivated by intrinsic factors, and hard to recruit. YouTube’s hard side is creators who produce content worth watching. Uber’s hard side is drivers.

Product teams that succeed at the cold start problem identify the hard side early and design specific strategies to solve for it first. Subsidizing the hard side, reducing friction for them specifically, and building features that serve their needs is often the entire job in the early days.

Flintstoning and Manual Effort

One of the most counter-intuitive lessons from successful cold starts is that the answer is often not a product feature at all — it’s human beings doing things manually that the product will eventually automate. Chen calls this “Flintstoning”: manually powering what looks like an automated product, the way the Flintstones powered their car by running their feet on the ground.

Reddit’s founders used multiple fake accounts to seed content and create the impression of an active community. Airbnb’s team flew to New York and photographed host listings themselves. Slack spent months personally calling friends at companies like Rdio, Cozy, and Medium, begging them to become beta users and give feedback. These weren’t failures of product thinking — they were deliberate strategies for bootstrapping a network before product automation could take over.

Tipping Points and Escape Velocity

The cold start problem is not solved once. It has to be solved again for each new network a product enters. But at some point, growth becomes self-sustaining: each new user makes the product more valuable, which attracts more users, who in turn add more value. Chen calls this reaching the tipping point: the moment when a network has enough density that it starts to grow organically without artificial support.

The product team’s job changes fundamentally once escape velocity is reached. Before it, the job is manual, scrappy, and deeply operational. After it, the job shifts to managing growth, improving the engagement loop, and protecting the network from degradation.

The Cold Start Problem in Action

Uber faced a classic two-sided cold start when it launched in 2009. The founding team’s first move was to avoid the general public entirely. Instead, they recruited professional drivers from limousine services, creating a reliable supply before demand. In each new city, the operations team executed a hyper-local playbook: cold-calling limo companies, passing out flyers near airports, and subsidizing drivers with guaranteed hourly pay during quiet periods (“In the early days, we paid drivers $20 to $30 an hour to sit there,” according to former Uber executive Scott Gorlick). The company tracked metrics not at the global level but at the individual city level: each city was treated as its own network with its own cold start problem to solve. When Uber launched in Kuala Lumpur, the team saw early signs of failure: almost no organic growth, with most rides coming from promotional campaigns. Their solution was counterintuitive. They pulled drivers out of outlying areas and concentrated them in a single 10-square-kilometer zone (the KLCC district), then aggressively marketed only that area. Product experience metrics improved immediately, organic growth followed, and the team expanded outward from that first successful atomic network.

Slack solved its cold start problem through deliberate, invitation-only seeding. Before any public announcement, Stewart Butterfield and his team personally called friends at companies including Rdio, Medium, and Cozy, asking them to test the product. “We begged and cajoled our friends at other companies,” Butterfield said. These first six to ten companies provided feedback that shaped the product before a wider preview release in August 2013. When that preview launched, 8,000 businesses requested invitations in the first 24 hours: not individuals, but teams. Two weeks later, the waitlist had grown to 15,000. Within about a year of its public launch in 2014, Slack had 285,000 daily active users. The company had solved its cold start not by going broad but by building dense, committed networks one team at a time.

Reddit confronted an empty platform problem in 2005. Without content, there would be no readers. Without readers, there would be no motivation for anyone to post content. Founders Steve Huffman and Alexis Ohanian solved this by creating dozens of fake user accounts and spending weeks posting, upvoting, and downvoting content themselves, manufacturing the appearance of an active, diverse community. By August 2005, just weeks after launch, real users had taken over and the founders no longer needed the fake accounts. The entire seeding effort had lasted less than two months. Reddit’s willingness to do what didn’t scale, and to be entirely honest about it in retrospect, is one of the clearest examples of Flintstoning ever documented in tech.

Why This Matters

The cold start problem is responsible for more networked product failures than almost any other single factor. Homejoy, an on-demand home cleaning marketplace, raised $40 million and shut down. Sidecar, a ridesharing app that pioneered several features Uber later adopted, could never reach critical mass in enough cities and closed. The pattern repeats across verticals: the product works, the team is capable, the funding is there — but the network never catches.

What makes this particularly dangerous for product teams is that it looks like a growth problem when it’s actually an experience problem. A platform that hasn’t solved its cold start doesn’t have low retention because of bad design — it has low retention because the product is genuinely less valuable without a dense network. Fixing the UI doesn’t solve the underlying emptiness.

The inverse is equally important: products that solve the cold start problem build a structural advantage that competitors find almost impossible to overcome. A networked product with a dense, engaged network isn’t just ahead — it’s in a different category of product from one that’s still cold. Network density beats network size, and network density is extraordinarily hard to replicate once someone else owns it.

For product teams, this means the first months of a networked product are not about growth at all — they’re about survival. The metrics that matter aren’t total users, but whether the users who are there are getting enough value to come back.

Putting It Into Practice

1. Define your atomic network before launch. What is the smallest unit of users that creates a self-sustaining experience? For a B2B collaboration tool, it might be a single team of five. For a local marketplace, it might be a single neighborhood. Resist the temptation to launch broadly. Launch small enough that the network is dense enough to work.

2. Identify and prioritize the hard side. Which side of the market is harder to acquire? Build features, incentives, and even manual processes specifically for them. The easy side will follow once the hard side is present.

3. Budget for Flintstoning. Accept that the early phase of a networked product will require human effort that doesn’t scale. Assign team members to seed content, manually onboard users, or do anything that creates the appearance of an active network while the real network builds. Plan for this operationally — it’s not a failure, it’s a strategy.

4. Track experience metrics, not just growth metrics. In the cold start phase, what matters is whether users who arrive are getting value. Track pickup times, not total rides. Track content engagement, not total posts. Track session depth, not total signups. The moment these experience metrics reach a healthy baseline, organic growth becomes possible.

5. Solve the cold start problem city by city, team by team, community by community. Don’t declare victory globally when you’ve only solved it locally. Each new market is a new cold start. Build a repeatable playbook from your first successful atomic network and execute it systematically as you expand.

Common pitfall: Treating the cold start problem as a marketing problem rather than a product operations problem. More ads won’t fill an empty network. More users arriving at an empty experience just means more disappointed users leaving.
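Steps 4 and 5 above can be sketched in a few lines of Python. This is a minimal illustration, not a recommended analytics setup: the ride log, the city names, and both thresholds (`MAX_MEDIAN_WAIT_MIN`, `MIN_ORGANIC_SHARE`) are made-up assumptions, and a real team would pick its own baselines. The point is only that health is judged per network, on experience metrics, rather than on a global ride count.

```python
from collections import defaultdict
from statistics import median

# Hypothetical ride log: (city, pickup_wait_minutes, came_from_promo).
# All values are invented for illustration.
RIDES = [
    ("city_a", 4, False), ("city_a", 6, False), ("city_a", 5, True),
    ("city_b", 25, True), ("city_b", 40, True), ("city_b", 30, False),
]

# Assumed baselines; a real team would calibrate these from its own data.
MAX_MEDIAN_WAIT_MIN = 10
MIN_ORGANIC_SHARE = 0.5


def network_health(rides):
    """Report experience metrics per city instead of one global total."""
    by_city = defaultdict(list)
    for city, wait, promo in rides:
        by_city[city].append((wait, promo))

    report = {}
    for city, rows in by_city.items():
        median_wait = median(wait for wait, _ in rows)
        organic_share = sum(1 for _, promo in rows if not promo) / len(rows)
        report[city] = {
            "median_wait_min": median_wait,
            "organic_share": organic_share,
            # A network counts as healthy only if both experience metrics pass.
            "healthy": median_wait <= MAX_MEDIAN_WAIT_MIN
            and organic_share >= MIN_ORGANIC_SHARE,
        }
    return report


for city, stats in network_health(RIDES).items():
    print(city, stats)
```

Both cities have the same number of rides in this toy log, so a total-rides dashboard would treat them as equal. The per-city view shows that only one of them is delivering an experience worth returning to.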

The Bigger Picture

There is something profound in the cold start problem that goes beyond product strategy. It reveals a truth about how value actually works in networked systems: value is not intrinsic to a product, it’s emergent from the relationships between its users. A telephone with one user is a paperweight. A social network with one user is a monologue. The product is the network — and the network doesn’t exist until people build it together.

This means product teams building networked products are not really building software. They are building conditions under which communities can form. The early job is less engineering and more urban planning: creating the right density, the right mix of participants, the right initial conditions for something alive to emerge.

The cold start problem is solved not by waiting for scale, but by engineering the first moment of genuine mutual value — the moment when one driver and one rider, one seller and one buyer, one creator and one reader, find each other and both walk away better off. Scale follows from that first real connection. Our job is to make it possible.

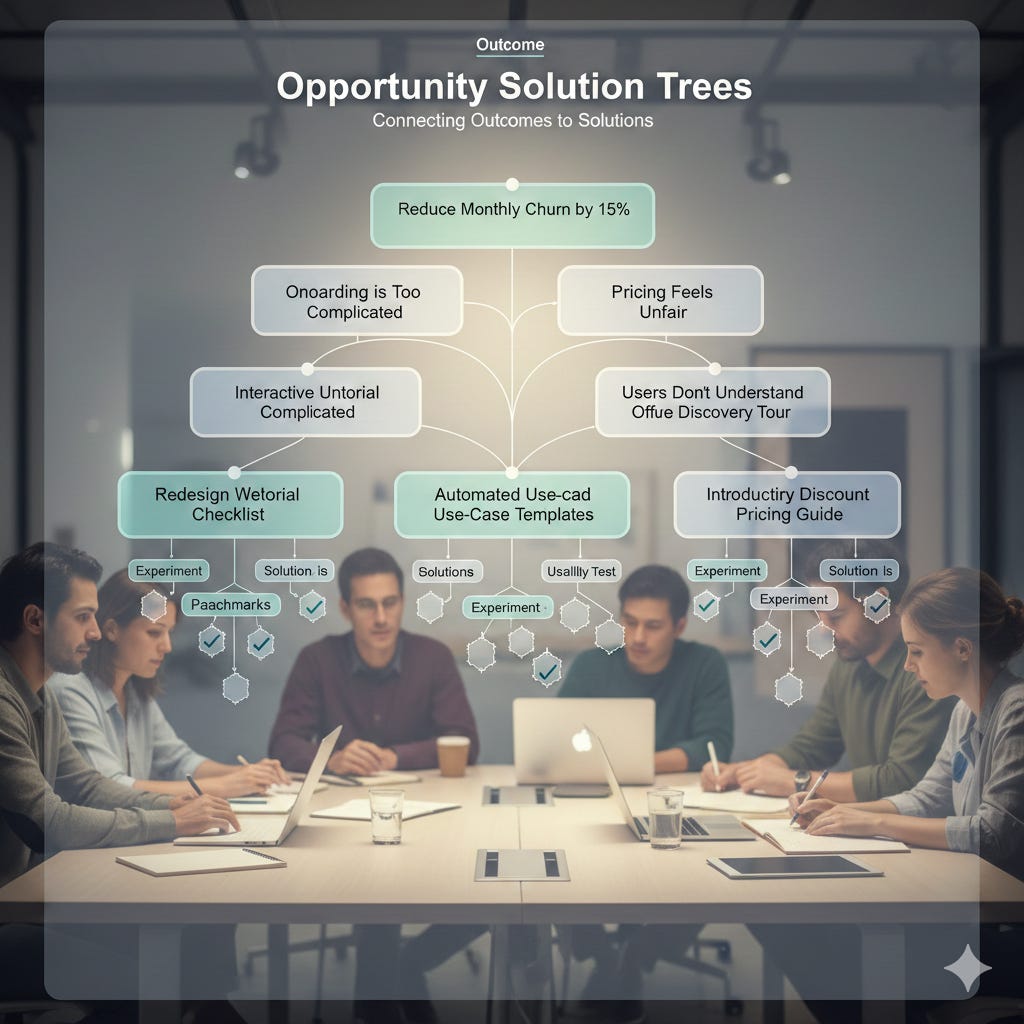

🍪 Opportunity Solution Trees 🌳: The Framework That Ends Opinion Battles in Product Discovery

How to connect business outcomes to customer needs to solutions — without losing your mind

Opening Hook

The roadmap meeting starts well. The team agrees on the outcome: reduce churn by 15% this quarter. Then someone suggests rebuilding the onboarding flow. Someone else wants to add a feature a customer mentioned in a support ticket last week. A third person insists the real problem is pricing. A fourth pulls out a competitor analysis. Twenty minutes in, we’re debating which feature to build — and somehow everyone has forgotten about churn entirely.

This is one of the most common failure modes in product teams: the jump from outcome to solution, skipping everything in between. The moment we articulate a goal, we start generating solutions. Our brains are wired for it. But solutions proposed without a structured understanding of the underlying customer problem are just guesses dressed up as roadmap items. Some will be right. Most won’t. And we’ll spend a quarter building things we weren’t sure about, for reasons we couldn’t clearly articulate, hoping the outcome improves.

There is a better way to navigate from “what we want to achieve” to “what we should build.” It’s called the Opportunity Solution Tree.

What Is an Opportunity Solution Tree?

An Opportunity Solution Tree (OST) is a visual discovery framework that helps product teams systematically map the path from a desired business outcome to tested solutions. Developed by Teresa Torres, a product discovery coach and founder of Product Talk, the OST was introduced in 2016 and later expanded in her 2021 book Continuous Discovery Habits.

Torres drew on techniques developed by Stanford professor Bernie Roth, who asked teams to connect their desired solutions to the underlying needs they were meant to serve, then explore multiple alternative solutions for each need. Torres applied this structure to product discovery and turned it into a repeatable visual tool.

The OST sits at the intersection of strategy and execution. At the top is a single desired outcome — a metric the team is trying to move. Below that are opportunities: customer needs, pain points, and desires that, if addressed, would help achieve that outcome. Below opportunities sit solutions: the things we could build. And below solutions sit experiments: the assumption tests we run to validate that a solution will actually work.

The result is a tree structure that makes explicit the chain of reasoning from business goal to product action — and that keeps discovery focused on real customer problems rather than feature brainstorms.
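For teams that keep their tree in a script or notebook rather than on a whiteboard, the four levels can be sketched as a small data structure. This is a minimal illustration, not Torres's own notation: the class names, the `render` helper, and the example solution and experiment are all assumptions made up for this sketch. The outcome and opportunities reuse examples from this article.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Experiment:
    assumption: str  # the risky assumption this test is meant to check


@dataclass
class Solution:
    name: str
    experiments: list[Experiment] = field(default_factory=list)


@dataclass
class Opportunity:
    need: str  # a customer need, pain point, or desire
    children: list[Opportunity] = field(default_factory=list)  # smaller sub-opportunities
    solutions: list[Solution] = field(default_factory=list)


@dataclass
class Outcome:
    metric: str  # the single metric the team is trying to move
    opportunities: list[Opportunity] = field(default_factory=list)


def render(outcome: Outcome) -> list[str]:
    """Flatten the tree into indented text lines, one per node."""
    lines = [f"Outcome: {outcome.metric}"]

    def walk(opp: Opportunity, depth: int) -> None:
        lines.append("  " * depth + f"Opportunity: {opp.need}")
        for child in opp.children:
            walk(child, depth + 1)
        for sol in opp.solutions:
            lines.append("  " * (depth + 1) + f"Solution: {sol.name}")
            for exp in sol.experiments:
                lines.append("  " * (depth + 2) + f"Experiment: {exp.assumption}")

    for opp in outcome.opportunities:
        walk(opp, 1)
    return lines


# The solution and experiment below are hypothetical placeholders.
tree = Outcome(
    metric="Reduce monthly churn from 8% to 5%",
    opportunities=[
        Opportunity(
            need="Onboarding is too complicated",
            children=[
                Opportunity(
                    need="Users don't understand what to do after signup",
                    solutions=[
                        Solution(
                            name="Guided first-run checklist (hypothetical)",
                            experiments=[
                                Experiment("New users will follow a checklist (hypothetical)")
                            ],
                        )
                    ],
                ),
                Opportunity(need="Users can't find the key feature that creates value"),
            ],
        )
    ],
)

for line in render(tree):
    print(line)
```

The one design choice worth noting is that an opportunity can hold both smaller opportunities and solutions, which is what lets a large opportunity be decomposed before anyone proposes what to build.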

Breaking Down the Opportunity Solution Tree

The Outcome: One Metric, One North Star

The OST starts with a single desired outcome. Torres is deliberate about this: not a list of OKRs, not a theme, not a vision statement, but one specific, measurable metric the team wants to improve. This might be “reduce monthly churn from 8% to 5%,” “increase weekly active users by 20%,” or “improve trial-to-paid conversion rate.”

The outcome serves as a focusing lens for everything below it. If an opportunity doesn’t plausibly connect to this outcome, it doesn’t belong on the tree. If a solution doesn’t address one of those opportunities, it doesn’t belong in the sprint. The outcome creates discipline. Without it, every customer complaint and every stakeholder request looks equally relevant.

The Opportunity Space: What Customers Actually Need

Opportunities are where discovery lives. An opportunity is a customer need, pain point, or desire that the team could address to improve the outcome. Torres is careful to distinguish opportunities from solutions: “This checkout flow is confusing” is an opportunity. “We should redesign the checkout flow” is a solution. Most teams skip to solutions before they’ve mapped the opportunity space thoroughly.

The opportunity space should come from customer interviews, not from brainstorming. Torres recommends continuous weekly customer interviews as the primary source of opportunities — not support tickets, not sales notes, not gut feeling. When opportunities are grounded in real customer language, teams stop debating what to build and start comparing which customer problem matters most.

The tree structure also allows teams to break large evergreen opportunities into smaller, more solvable ones. “Onboarding is too complicated” is real but unwieldy. Breaking it down into “users don’t understand what to do after signup,” “users can’t find the key feature that creates value,” and “users don’t understand how to invite their team” gives us three discrete problems to prioritize and explore separately.

Compare and Contrast, Not Whether or Not

One of the most practically useful aspects of the OST is how it changes decision-making conversations. In most teams, decisions sound like “should we build this feature or not?” — a yes/no debate that generates opinion battles. The OST reframes decisions as comparisons: “Given our outcome, which of these three opportunities is most impactful to address right now?” Comparing two or three options against the same outcome is much easier than defending a single idea against vague skepticism.

Torres calls this “compare and contrast” decision-making, and it changes the energy of discovery conversations entirely. The question is no longer “is someone’s idea good?” — it’s “which of these customer problems is most worth solving given what we’re trying to achieve?”

Solutions: Multiple, Parallel, Small

The OST pushes teams to generate multiple solution ideas for each opportunity rather than converging too quickly on one. This sounds obvious, but in practice most teams treat the first reasonable solution as the only solution. The OST’s branching structure forces the question: what else could we build to address this same customer problem?

Having multiple solutions on the tree also changes how teams think about testing. If there are three possible solutions for a given opportunity, we can run lightweight assumption tests on all three before investing in any one of them — rather than building something fully and hoping it works.

Experiments: Test Assumptions, Not Features

The leaf nodes of the OST are experiments. Torres emphasizes that experiments should target the riskiest assumptions underlying a solution, not test the full solution itself. Most assumptions can be tested in a day or two with a quick prototype, user interview, or data analysis — far faster than building a feature and waiting for usage data.

This changes the product team’s relationship to uncertainty. Instead of “we’re not sure this will work, let’s build it and see,” the posture becomes “here’s the key assumption we need to validate, here’s how we’ll test it quickly, and here’s what we’ll do if we’re wrong.”

Opportunity Solution Trees in Action

Intercom built its entire product strategy around continuous discovery that closely mirrors OST methodology. Rather than managing a feature backlog, Intercom’s product teams work from jobs-to-be-done research to identify the customer problems worth solving. When launching their conversation routing product, the team conducted extensive customer interviews before defining solutions — mapping the opportunity space around how customer support teams struggled to get the right messages to the right agents. This discovery-led approach helped Intercom maintain strong product-market fit through rapid growth, reaching $150 million in ARR while continuing to launch products that customers actively requested rather than features teams assumed were needed.

Spotify uses a discovery process that aligns closely with the OST’s outcome-driven approach. Rather than starting with features, Spotify product squads are given an explicit outcome — often framed around a user behavior metric like time spent listening, playlist creation rates, or podcast completion. Teams then independently explore the opportunity space through customer research before converging on solutions. This structure helped Spotify design the Discover Weekly feature: the team identified that users were frustrated with the effort required to find new music they’d actually enjoy, then explored multiple solutions (algorithmic recommendations, editorial playlists, social sharing) before testing assumptions about which approach would resonate most. Discover Weekly launched in 2015 and generated 1.7 billion streams in its first 10 weeks.

Booking.com runs one of the most sophisticated continuous discovery operations in product. The company runs over 1,000 A/B experiments simultaneously and has embedded customer research deeply into its discovery process. Product teams at Booking.com work from specific conversion and retention outcomes, map the opportunity space through user research and behavioral data, and generate multiple solution hypotheses before running lightweight tests. This approach helped the company grow to over 28 million listings across 230 countries while maintaining a conversion-focused product experience — demonstrating that systematic opportunity exploration at scale produces better product decisions than intuition-driven feature development.

Why This Matters

The hidden cost of skipping opportunity mapping is enormous. Teams build features that solve the wrong problem elegantly. They build features that solve a real problem, but not one connected to the outcome they’re trying to move. They build features that solve a real problem connected to the right outcome — but miss a simpler, better solution they never stopped to consider because they jumped to the first idea.

Research on product failure consistently points to building things customers don’t want as a leading cause of product team underperformance. The Standish Group’s Chaos Report finds that a significant portion of product features are rarely or never used. OST-style discovery doesn’t eliminate this waste entirely, but it creates a systematic check at every step: does this opportunity connect to our outcome? Does this solution address a real customer problem? What’s the fastest way to find out if we’re wrong?

The framework also addresses one of the most politically difficult dynamics in product teams: competing stakeholder priorities. When everyone has a different opinion about what to build, those opinions often can’t be resolved by debate. The OST reframes the debate: instead of arguing about whose idea is better, teams can ask which opportunities have the most customer evidence and which solutions have the most validated assumptions. Evidence, not opinion, wins.

Putting It Into Practice

1. Start with one outcome, not a list. Resist the temptation to have the OST serve multiple metrics simultaneously. Pick the one outcome that matters most right now and build the tree around it. If the outcome changes, start a new tree, because every branch below the old outcome was chosen to serve it.

2. Source opportunities from customer interviews, not from the room. Before building the opportunity space, commit to a minimum number of customer interviews; Torres recommends a weekly cadence. Opportunities that come from direct customer language are more credible and more defensible than those that come from stakeholder speculation.

3. Break big opportunities into smaller ones. If an opportunity feels too large to solve in a sprint, keep decomposing. The goal is to find opportunities that are specific enough to address with a well-scoped solution and testable assumptions.

4. Generate three solutions before committing to one. For each target opportunity, force the team to name at least three possible solutions before evaluating any of them. This breaks the anchoring effect of the first idea and surfaces genuinely different approaches.

5. Make experiments small enough to run in days, not weeks. If testing an assumption requires building a feature, the scope of the assumption is too large. Break it down to something that can be explored with a prototype, a short user interview, or an analysis of existing behavioral data.

Common pitfall: Treating the OST as a documentation exercise rather than a live thinking tool. The tree should change every week as interviews produce new opportunities and experiments resolve assumptions. A static OST is just a different kind of backlog.

The Bigger Picture

There’s a deeper truth in the OST framework that goes beyond its mechanics. It reflects a fundamental shift in how product teams relate to uncertainty. Traditional product development treats uncertainty as something to eliminate before shipping: we define requirements, we build against spec, we hope it works. The OST treats uncertainty as something to navigate deliberately: we identify our assumptions, we find the cheapest way to test them, we update our thinking constantly.

This is uncomfortable for many teams — and many organizations — because it requires accepting that we don’t know what to build yet. That acceptance is hard. Leaders want roadmaps. Stakeholders want commitments. Quarterly planning demands certainty we don’t have.

But the teams that accept this uncertainty and navigate it systematically — through continuous discovery, through deliberate opportunity mapping, through small experiments — consistently build products that customers actually want. The OST doesn’t give us certainty. It gives us a better way to be uncertain.

And in product development, that’s the best we can hope for.

🍪 Social Proof Asymmetry 👥: Why “10,000 Happy Customers” Sometimes Kills Conversions

Not all social proof is equal — and using the wrong kind at the wrong moment can hurt more than help

Opening Hook

The landing page has everything. Testimonials from five happy customers. A logo wall from thirty enterprise clients. A counter showing 47,000 users. A press mention from TechCrunch. And conversion is still 1.2%.

We’ve all been there — or watched it happen — with a product that should be easy to trust. Every box on the social proof checklist is ticked. And yet something isn’t working. The instinct is to add more: more reviews, bigger logos, more data points. But adding more of the same thing that isn’t working rarely solves the underlying problem.

The issue isn’t the quantity of social proof. It’s the type, and the context in which it appears. Social proof is not a single phenomenon — it’s a family of distinct psychological mechanisms that operate very differently depending on who is reading them, what decision they’re facing, and how similar the proof source feels to them. Getting this wrong doesn’t just fail to persuade — it can actively undermine trust.

This is social proof asymmetry: the insight that different forms of social proof have dramatically different effects on different people in different moments, and that understanding those differences is one of the highest-leverage skills in product design.

What Is Social Proof Asymmetry?

Social proof is the psychological and social phenomenon in which people look to others’ behavior to determine the correct course of action in uncertain situations. The term was coined by Robert Cialdini in his 1984 book Influence: The Psychology of Persuasion. Cialdini identified social proof as one of the six core principles of persuasion — the tendency to assume that if others are doing something, it must be the right thing to do.

But Cialdini also identified something more nuanced: social proof operates through different mechanisms depending on who the reference group is and what kind of uncertainty is being resolved. We’re influenced by experts when we’re uncertain about what’s true. We’re influenced by peers when we’re uncertain about what’s normal. We’re influenced by numbers when we’re uncertain about whether something is popular. And we’re most influenced by people like us — same role, same industry, same situation — when we’re uncertain about whether something is right for us specifically.

Social proof asymmetry is the principle that these different mechanisms vary dramatically in their persuasive weight depending on context. A testimonial from an industry expert may dominate a page for a technical B2B buyer but be irrelevant to a consumer making an impulse purchase. A large number of users may build confidence in a consumer app but trigger skepticism about product-market fit in an enterprise buyer who wonders why their specific type of company isn’t listed. The same proof, shown to the wrong audience at the wrong moment, can actively reduce conversion rather than increase it.

Understanding these asymmetries — and designing social proof with them in mind — is what separates high-converting product experiences from ones that check every box but still underperform.

Breaking Down Social Proof Asymmetry

Expert Proof: Trust Through Authority

Expert social proof borrows credibility from figures with recognized knowledge or institutional authority. Dermatologist recommendations, “as seen in Forbes” badges, security certifications, and academic credentials all function as expert proof. Expert proof is most effective when the purchase decision involves significant knowledge uncertainty — when buyers genuinely can’t assess quality themselves and need a qualified proxy.

The limitation of expert proof is specificity. A general expert endorsement communicates that a product is good, but not that it’s good for a particular use case. Enterprise software buyers, for instance, often find analyst reports (Gartner, Forrester) more persuasive than generic expert testimonials because analysts assess specific use cases against specific requirements. Matching the expert’s authority domain to the buyer’s specific uncertainty is what makes this form of proof work.

Peer Proof: Trust Through Similarity

Peer proof operates through a different mechanism: not “this person knows more than me” but “this person is like me, and it worked for them.” Cialdini’s research shows that people are more persuaded by someone similar to them than by someone more authoritative. A testimonial from a 40-person SaaS startup in the same industry as the buyer is often more persuasive than a case study from a Fortune 500 company, even though the latter signals more scale.

Peer proof requires deliberate similarity matching. A testimonial that says “This product changed our workflow” is weak peer proof. A testimonial that says “As a solo product manager at a B2B SaaS company with a small engineering team, this tool helped me align stakeholders without a dedicated project manager” creates specific recognition in the right audience. The more precisely the proof source mirrors the reader’s situation, the more effective it becomes.

Quantity Proof: Trust Through Consensus

Numbers — “1 million users,” “10,000 five-star reviews,” “trusted by 500 companies” — work through consensus. When enough people have made the same choice, it feels safer to make it too. This form of proof is particularly effective for reducing purchase anxiety in consumer contexts and for validating early adoption in competitive categories.

But quantity proof has a counterintuitive failure mode: it can backfire when the number is too low, too generic, or inconsistent with the buyer’s mental model of the product. A product claiming 10,000 users in a category where the market leader has 10 million reads as evidence of weakness, not strength. A B2B tool listing “500 companies” without specifying what kind creates uncertainty rather than resolving it. And perhaps most importantly, a large general number without relevant reference group data can feel irrelevant: “50,000 people use this” matters less than “used by 200 product managers at Series B companies like yours.”

Friend Proof: Trust Through Network

The highest form of social proof, when available, is a personal recommendation from someone in the reader’s actual network. Nielsen research has found that 92% of consumers trust recommendations from people they know over any form of advertising. Referral programs, LinkedIn social integrations, and “your colleague Jane uses this” notifications all attempt to surface this form of proof programmatically.

Friend proof is powerful precisely because it collapses the trust hierarchy entirely: instead of inferring trustworthiness through proxies, the reader has direct evidence from someone whose judgment they already trust. The challenge for product teams is that this form of proof can’t be manufactured — it can only be facilitated. Designing referral mechanics, social sharing hooks, and “see who you know” features are all attempts to put friend proof in front of users at the right moment.

Context Asymmetry: The Moment Matters

The same proof element can function differently at different points in the funnel. A logo wall from enterprise clients provides reassurance on a homepage but creates friction on a pricing page where a startup buyer is trying to figure out if the product is right for them. A large number of reviews may be more persuasive during search and consideration than at the point of checkout, where specific relevant testimonials do more work.

Effective social proof design is less about adding proof everywhere and more about matching proof type to the specific uncertainty the user faces at each stage of their journey.

Social Proof Asymmetry in Action

Airbnb faced a uniquely difficult trust problem: convincing people to invite strangers into their homes, or to sleep in a stranger’s home. Generic social proof — “millions of listings worldwide” — did almost nothing to address the specific fear. Airbnb’s solution was to layer multiple targeted proof types and match each to a specific trust barrier. Profile photos addressed the dehumanization fear: seeing a real face made a stranger feel less like a stranger. Bidirectional reviews addressed performance uncertainty: both host and guest rate each other, creating mutual accountability. The Superhost badge provided expert certification for hosts who met consistent quality standards. A joint study with Stanford University confirmed that people were more likely to trust others who were similar to them — prompting Airbnb to surface shared connections through Facebook integration so prospective hosts and guests could see mutual friends. This multi-layered, context-aware approach helped Airbnb grow to 150 million users in a market most observers believed would never reach mainstream adoption.

Booking.com has conducted thousands of A/B tests on social proof elements and developed some of the most rigorous evidence in the industry on what works. Their research found that displaying real-time scarcity signals (“Only 2 rooms left at this price”) combined with peer-relevant recency data (“Booked 3 times in the last 6 hours by people from your country”) dramatically outperformed static review counts. Booking.com also found that reviews from verified guests in the same traveler category as the current user (solo travelers rating for solo travelers, families for families) converted significantly better than aggregate scores. The company’s conversion rates, consistently among the highest in online travel, are in part a product of this sophisticated proof matching.

CeraVe built its entire brand positioning around a single expert proof source: dermatologist recommendation. Rather than pursuing celebrity endorsements or user review volume, the brand invested heavily in clinical validation and dermatologist testimonials that spoke specifically to the fears of its target audience — people with sensitive or problematic skin who were uncertain whether a product would cause harm. “Developed with dermatologists” became the brand’s central social proof claim. The specificity worked: CeraVe grew from a niche pharmacy brand to a cultural phenomenon, with dramatic sales growth driven largely by organic social proof from dermatologists on TikTok and Instagram who recommended the products to their audiences. The expert proof matched the audience’s specific uncertainty about skin safety, producing outsized trust.

Why This Matters

The research on social proof is striking in its breadth. Products with customer reviews show 270% higher purchase likelihood than those without. Testimonials on sales pages increase conversion by an average of 34%. Real-time social proof notifications showing live customer activity boost conversions by up to 98%. But these numbers come with a crucial caveat: they reflect the aggregate effect of social proof that works. The same research shows that low-quality, mismatched, or poorly placed social proof can actively harm conversion by introducing doubt rather than resolving it.

Products with ratings between 4.2 and 4.5 stars convert better than products with perfect 5.0 ratings — because perfection reads as fake. A product with three detailed, specific negative reviews alongside 200 positive ones converts better than a product with 200 positive reviews and no negative ones — because the negative reviews make the positive ones credible. The psychology is consistent: proof that is too smooth, too generic, or too distant from the reader’s actual situation gets discounted.

For product teams, this means social proof is not a box to check but a design discipline to practice. The question is not “do we have social proof?” but “does the proof we’re showing match the specific uncertainty the user has at this specific moment?”

Putting It Into Practice

1. Map your users’ specific trust barriers by segment. A technical buyer evaluating developer tools has different uncertainties than a non-technical buyer. An enterprise decision-maker has different concerns than an individual self-service user. For each key user segment, list the two or three questions they most need answered before converting, and design proof specifically to address each.

2. Match proof type to uncertainty type. Functional uncertainty (“will this actually work?”) responds best to expert and peer proof with specific use case details. Popularity uncertainty (“is this the right choice among all options?”) responds to quantity and consensus proof. Identity uncertainty (“is this right for someone like me?”) responds best to similarity-matched testimonials. Personal uncertainty (“is this risky?”) responds to friend proof and reversibility signals.

3. Put proof at the point of friction, not at the top of the page. The most valuable place for social proof is the moment just before a user would otherwise leave. Test proof placement at checkout, at upgrade prompts, and immediately after users encounter complexity or potential objections.

4. Test proof specificity. Generic testimonials (“We love this product!”) consistently underperform specific ones (“This reduced our sprint planning time by 40% and we onboarded our whole team in two hours”). Run A/B tests comparing your most specific available testimonials against your most generic ones; the results are almost always dramatic.

5. Treat negative indicators as trust signals, not problems to hide. A small number of visible, responded-to negative reviews increases overall credibility. A pricing page that acknowledges limitations (“This is not the right tool if you need offline access”) converts better than one that makes unqualified claims. Honesty is its own form of social proof.

Common pitfall: Logo walls that include your largest clients but not your most representative ones. An enterprise logo wall on a product page targeted at startups signals “this product is not for you” — the opposite of the intended effect.
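The matching rule in step 2 can be written down as a simple lookup, which is handy when auditing a page element by element. This is only a sketch of the pairings listed above: the dictionary name, the `recommend_proof` function, and the short labels for each uncertainty type are assumptions introduced for illustration.

```python
# Pairings taken from step 2 above; the keys are shorthand labels, not established terms.
PROOF_BY_UNCERTAINTY = {
    "functional": ["expert proof", "peer proof with specific use case details"],
    "popularity": ["quantity proof", "consensus proof"],
    "identity": ["similarity-matched testimonials"],
    "personal": ["friend proof", "reversibility signals"],
}


def recommend_proof(uncertainty: str) -> list[str]:
    """Return the proof types suggested for a given kind of user uncertainty."""
    key = uncertainty.strip().lower()
    if key not in PROOF_BY_UNCERTAINTY:
        known = ", ".join(sorted(PROOF_BY_UNCERTAINTY))
        raise ValueError(f"Unknown uncertainty type {uncertainty!r}; expected one of: {known}")
    return PROOF_BY_UNCERTAINTY[key]


# Example: a pricing page where a startup buyer wonders "is this right for someone like me?"
print(recommend_proof("identity"))
```

A lookup like this will not tell us which uncertainty a given user actually has. That still comes from the segment mapping in step 1.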

The Bigger Picture

Social proof asymmetry points to something important about how trust actually works. Trust is not a single thing we either have or don’t. It’s a collection of specific uncertainties, each of which requires a specific kind of resolution. When we treat social proof as a monolith — more proof equals more trust — we miss the underlying psychology entirely.

The best product teams think of trust not as a property of their product, but as a state they’re trying to create in a specific person at a specific moment. That person has particular fears, particular reference points, and particular standards for what counts as credible evidence. Meeting them where they are — with proof that resonates with their specific situation and addresses their specific doubts — is more valuable than any amount of generic validation.

We’ve all seen products fail not because users don’t want them but because users don’t quite trust them. Social proof, used with precision and intentionality, is one of the most powerful tools we have for closing that gap.

🔥 MLA week #39

The Minimum Lovable Action (MLA) is a tiny, actionable step you can take this week to move your product team forward—no overhauls, no waiting for perfect conditions. Fix a bug, tweak a survey, or act on one piece of feedback.

Why does it matter? Culture isn’t built overnight. It’s the sum of consistent, small actions. MLA creates momentum, one small win at a time, and turns those wins into lasting change. Small actions, big impact.

MLA: PLG Moment Hunt

Why This Matters

Most PMs can list their product’s features. Far fewer can answer one simple question: at exactly what moment does a new user first understand that this product is valuable to them? That moment — the “aha moment” — isn’t marketing language or philosophy. It’s a concrete, measurable point in the user journey that determines whether someone stays with a product or quietly disappears.

Research from OpenView shows that best-in-class PLG companies achieve ~33% activation rates — and the gap between them and the rest of the market comes down to awareness and optimization of this one moment. Facebook discovered that users who added 7 friends within their first 10 days were far more likely to become long-term active users. Slack built its viral growth around the moment a team sends 2,000 messages. Your product has a moment like this too — it’s just that nobody has named it or measured it yet.

That’s exactly the problem this MLA addresses. It’s not about rewriting your entire onboarding or running a months-long data study. It’s about spending one week — with zero budget — forming a hypothesis, checking it against available data, and sharing what you find with your team. A small action that can fundamentally shift how you see your product.

How to Execute

1. Form Your Aha Moment Hypothesis

Start with one simple question: what is the first moment when a new user feels that your product is genuinely working for them? Don’t reach for a general answer — look for a specific action.

A few examples for inspiration:

Spotify: first time playing a mood-matched playlist

Notion: creating a first database linked to another page

Calendly: a first visit to your link by an external person

Write your hypothesis in one sentence: “Our users reach the aha moment when they [specific action] within [timeframe].”

2. Check Whether the Hypothesis Has Data Behind It

Open your analytics tool — Mixpanel, Amplitude, GA4, whatever you have. You don’t need advanced analysis. You’re looking for an answer to one question: how many new users actually reach the action you identified?

If you have access to retention data, check whether users who completed that action stay longer than those who didn’t. That’s the simplest correlation test available to you.

If you don’t have the data — that’s also a valuable finding. Write it down.

3. Talk to One User

Call or message one person who actively uses your product. Ask them two questions:

“When did you first feel that this product was actually helping you?”

“What were you doing in the product at that moment?”

Don’t suggest answers. Just listen. Users often point to a different moment than the one you assumed — and that’s precisely where the insight lives.

4. Measure How Many Users Actually Get There

Go back to the data and calculate: what percentage of new users (from the last 30 days) completed the action you identified as the aha moment? The benchmark for top PLG companies is ~33% activation.

If your number is well below 33%, you have a clear area to improve in onboarding. If it’s above — consider looking for a deeper moment that correlates with long-term retention rather than just initial activation.
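If your analytics tool can export a list of new users, the calculation in this step (and the retention comparison from step 2) fits in a few lines. The sketch below is an illustration under stated assumptions: the `NewUser` fields, the function names, and the ten-user cohort are invented for the example, and “retained” means whatever later checkpoint you choose.

```python
from dataclasses import dataclass
from typing import Optional

BENCHMARK = 0.33  # the ~33% activation figure cited above


@dataclass
class NewUser:
    user_id: str
    reached_aha: bool  # completed the hypothesized aha action
    retained: bool     # still active at the later checkpoint you picked


def activation_rate(users: list[NewUser]) -> float:
    """Share of new users who completed the aha action."""
    if not users:
        return 0.0
    return sum(u.reached_aha for u in users) / len(users)


def retention_rate(users: list[NewUser], reached_aha: bool) -> Optional[float]:
    """Retention within one group: users who did (or did not) reach the aha action."""
    group = [u for u in users if u.reached_aha == reached_aha]
    if not group:
        return None  # no users in this group, so there is nothing to compare
    return sum(u.retained for u in group) / len(group)


# Invented sample cohort: 3 users reached the action, 7 did not.
cohort = (
    [NewUser(f"a{i}", True, i < 2) for i in range(3)]
    + [NewUser(f"n{i}", False, i < 1) for i in range(7)]
)

rate = activation_rate(cohort)
print(f"Activation: {rate:.0%} (benchmark {BENCHMARK:.0%})")
print("Retention, reached aha:", retention_rate(cohort, True))
print("Retention, did not reach aha:", retention_rate(cohort, False))
```

A gap between the two retention numbers is only a correlation, which is all this step asks for. With a real export, expect small groups to produce noisy rates.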

5. Prepare a 2-Minute Summary for Your Team

You don’t need a presentation. A standup sentence or a Slack message is enough:

“Our aha moment is probably [action]. [X]% of new users reach it. One user conversation suggested [insight]. Does anyone see this differently?”

The goal isn’t to announce a discovery — it’s to start a conversation.

6. Write It Down and Propose One Next Step

At the end of the week, document the following in one place (Confluence, Notion, Google Doc — anything):

Your aha moment hypothesis

The data that supports or challenges it

One experiment worth running (e.g., “simplify step X in onboarding”)

You don’t have to implement it. It just needs to exist — it gives you a starting point for future decisions and makes the invisible visible for the whole team.

Expected Benefits

Immediate Wins

A concrete aha moment hypothesis — something most product teams have never formally defined

A number showing what percentage of users actually activate in your product

One insight from a user conversation you can share with your team this week

Relationship & Cultural Improvements

A shared language across the team around activation and user value

A natural invitation for PM, engineering, and customer success to discuss what “actually works” in the product

A shift in perspective from “how many features do we have” to “how many users experience value”

Long-Term Competency Development

A habit of thinking in PLG terms: activation → retention → growth

A foundation for more advanced cohort analysis and onboarding experimentation

A deeper understanding of how behavioral data connects to product decisions — a core competency in every mature PM team

Share your aha moment with #MLAChallenge! What did you find? Did the data surprise you?

📝 Dear UX Designer, The Workflow Changed. Did You? (guest article by Michał Kosecki)

I keep having the same conversation.

A designer (mid-level, smart, genuinely curious) tells me they “know AI is important” but can’t quite bring themselves to use it seriously. Not because they’re lazy but because every time they sit down to try, the noise is so loud they don’t know where to start.

Another guru declaring the end of design. Another counter-guru declaring AI can never replace human creativity. Another Figma release with AI sparkles on features nobody uses. Another newsletter with a framework for “AI-native designers” that turns out to be a list of tools (with the author’s own vibe-coded tool slipped in among the obvious choices).

Nielsen Norman Group named this formally: 2026 is the year of AI fatigue. The hype ran in both directions and failed in both directions. Catastrophists predicted mass layoffs within six months. Utopists promised 10x productivity “starting today.” Both were wrong, and both are still at it, because they have a newsletter to fill.

The reality is less dramatic, which is why it’s more dangerous. There’s no moment where everyone in the room agrees something changed. There’s just slow, steady pressure. A project that used to take three weeks. A first draft that used to take a day. Both now take less - not for you, but for the designer working next to you who decided to engage instead of wait. Easy to ignore. Easy to tell yourself it’s not yet.

That’s the moment most designers make the mistake.

What Figma just shipped (and what it means)

Figma recently published an announcement that some designers scrolled past. It describes a new integration: Claude Code to Figma. You build UI in code using Claude Code, and with one action it converts to editable frames on the Figma canvas. From there, back to code via the Figma MCP server - a closed loop.

The reasoning Figma gives for why this exists is worth reading slowly: “Code is powerful for converging - running a build, clicking a path, and arriving at one state at a time. The canvas is powerful for diverging - laying out the full experience, seeing the branches, and shaping direction collectively.” And more is yet to come; just wait for the Config conference.

That sentence just redefined where a designer’s value lives.

AI generates fast. Code is linear, single-player, one state at a time. The canvas is where you open that up: compare variants side by side, see the system at once, make decisions visible to the whole team. Figma isn’t saying AI replaces designers. It’s saying AI handles the converging, and humans are still the ones who need to do the diverging - seeing the system, exploring the branches, deciding which direction is worth pursuing.

The workflow your job lives inside just changed, in the tool you use every day.

If you missed it, that’s fine. But you should understand what it means: the price of not engaging with AI just went up. Again. Measurably, specifically, in your primary tool.

What AI actually is (and isn’t)

Most frustration I see around AI comes from a broken mental model. You’re treating it like superintelligence, or like an expensive, stupid automaton. Neither is true, and both mistakes cost you.

AI is a very fast, very confident junior who has read everything ever written about design and understands none of the context you work in.

That’s not an insult to AI. Pattern matching at scale is genuinely useful. A hundred variants in minutes. First drafts to react to - because people know what they want far better when they see what they’re rejecting, and a blank Figma file is terrifying while something-to-argue-with is priceless. The tedious work: resizing, reformatting, copy variations, documenting decisions you’ve already made. Hours every week you’ve been spending with low-grade guilt, knowing it’s not where your thinking belongs.

But “pattern matching” and “understanding intent” are not the same thing — and that gap is where you’re irreplaceable. The speed that makes AI useful for exploration is exactly the same property that makes it unreliable: it generates without filtering through context. Your job is to separate signal from noise before it reaches the room.

What that looks like in practice: AI reads data, not “why.” It doesn’t know your user is frustrated not because the button is too small but because they don’t understand why they’re on this page at all. It doesn’t know the CEO promised this feature to a client over dinner and that’s why it’s a priority against all product logic. It doesn’t know technical debt limits your options to two instead of five, and one of those two will ship so late it’s not worth building.

It doesn’t know the B2B user who fills in this form on a Friday at 4pm on their phone, tired, in a hurry, notifications off. Not the persona version in Notion. The real one. AI doesn’t know your persona is a useful fiction for internal alignment. You do.

And AI is wrong with remarkable confidence. Solutions it generates often look coherent and fall apart at first contact with the edge case, the user who doesn’t behave like training data, the accessibility constraint that doesn’t show up in any benchmark. Someone has to catch that before it reaches a stakeholder. That’s you. That will remain your job for longer than most predictions suggest.

The Figma announcement said it more clearly than most: AI converges, humans diverge. Build the habit of knowing which moment you’re in.

Four levels, and why you’re probably not where you think

Looking at actual design teams in 2026, I see four levels of working with AI. Not as a moral gradient but rather as an effectiveness gradient. A map of where people actually are, not where they think they are.

Level zero: denial. “My craft is the value. I don’t need AI.” Maybe craft is the value. But your competition isn’t just other designers - it’s designers with AI. Two people doing the work of three. Level zero isn’t a philosophical position. It’s career risk that compounds every month, whether you think about it or not.

Level one: dabbling. You use ChatGPT for copy, Nano Banana for inspiration, maybe Claude for “what do you think about this wireframe?” Every use is a special occasion, detached from real work. The gap between “I know it exists” and “I use it daily as part of my workflow” is enormous and very easy to miss - because after each occasional use you can tell yourself you’re doing it. Most designers who say they “use AI at work” are here.

Level two: integration. AI is part of workflow, not a separate project. You know when to use it and when to ignore it. First drafts, exploration, iteration. You trust your judgment over its output - and when it proposes something wrong, you catch it before it reaches the room.

What does Tuesday morning look like here? You open a brief, generate three rough directions in twenty minutes instead of one careful wireframe in two hours. You pick the direction that smells right, tear apart what’s wrong with the AI version, and build from there. The decision is yours. The starting point wasn’t blank. This is where most designers reading this should aim. Not “AI-native” as an identity. Just a tool you reach for when it helps, as naturally as Figma.

Level three: architecture. You’re designing AI-native products - conversational interfaces, agentic systems, generative UI. Not using AI to design, but designing AI experiences. A small percentage of designers are here now, but that percentage is growing fast, and the Claude Code integration is a direct signal: the boundary between design and AI product is already dissolving.

The AI Design Maturity Model that’s been circulating defines analogous levels for whole organizations - Limited, Reactive, Developing, Embedded, Leading. A designer can be at level two or three in a company sitting at Reactive. That’s not a frustration to post about on Slack. That’s a negotiating position. If you can translate AI fluency into product and design language for an organization that doesn’t speak it yet, you’re offering value they’re almost certainly underpricing. Somebody will eventually notice. Make sure it’s you who names it first.

The thing about fluency

Most conversation about AI fluency treats it as addition - a new skill sitting on top of what you already know. Learn to prompt, use the right tools, run the right experiments. Check the box, you’re fluent.

That framing is responsible for a lot of designers staying at level one indefinitely.

Real fluency is diagnostic. It’s the ability to look at AI output and know immediately what’s wrong with it, why it’s wrong, and whether the fix requires better context or whether this was a job you should have done yourself. That judgment doesn’t come from reading about AI. It comes from using it on real work and paying close attention to where it fails.

The designers I’ve seen move through this fastest treat every AI interaction as a test - not of the tool, but of themselves. They use it for a first draft, improve it, and then ask why they made those specific changes. “I moved the CTA above the social proof because our users need to see the value before they see the validation.” “I rewrote the headline because AI defaults to benefit framing, and our users are more motivated by risk avoidance - completely different message architectures.” “I cut the animation because AI adds motion for perceived modernity, but our B2B users open this panel twenty times a day and will hate every 300ms within a week.”

Each of those corrections is knowledge embedded in a product, a set of users, and decisions accumulated over years. It can’t be copied. AI doesn’t have it. You do - and as long as you can articulate it, you’re not relevant despite AI, you’re specifically valuable because AI exists and someone has to know when it’s wrong.

The other side of that coin: the time you recover from offloading tedious work doesn’t automatically reinvest itself in higher-order thinking. It requires a choice. Karri Saarinen from Linear said it plainly after Config 2025: “Technology makes it faster to build, but harder to care.” AI speeds up execution. But someone still has to slow down and ask whether you’re building the right thing at all. That’s what the Figma canvas is for. That’s what you’re for.

If you can’t or won’t adapt

This path isn’t for everyone. If you loved design because you loved making things beautiful, and the idea of focusing on strategy and judgment sounds boring or unfulfilling, I get it. You’re allowed to want a craft-focused career.

But you need to know: that career is disappearing in mainstream tech. Not because craft doesn’t matter, but because craft-only roles are being absorbed by AI and offshore teams that can execute at higher speed and lower cost.

Where craft-focused roles still exist: brand design (high-touch, luxury, or marketing-focused work where aesthetic differentiation is the product), motion design (AI hasn’t caught up yet, but it’s coming), physical product design (industrial design, print, environmental, domains where digital execution tools don’t apply the same way).

Or consider leaving design for an adjacent field (I know it’s a harsh thing to hear or read): product management (if you have product sense but don’t want to execute), UX research (if you love understanding users but not making interfaces), technical writing (if you like clarity and structure), developer relations (if you can bridge design and engineering).

The market is telling you something. You can argue with it, or you can listen and adapt. Arguing doesn’t change the outcome.

Before you choose any path, run this reality check. You might not be as good as you think. The market is efficient (mostly). If you’re not getting callbacks after 50+ applications, your portfolio might be the problem. Get brutally honest feedback from a senior designer who’s NOT your friend. Pay for a portfolio review if needed. Common issues: projects show execution but not thinking, no evidence of impact or outcomes, visual style is dated, work looks same-y.

You might be applying to the wrong companies. If 100% of your applications are to big traditional companies using 2019 playbooks, you’ll waste months. Focus on the 20% of companies that are future-focused: design-led startups, AI-native companies, places with strong design culture and fast shipping cadence.

You might need to skill up. If you can’t confidently say “I use AI in my workflow,” “I can prototype in code (even basic),” or “I understand business metrics,” do a 30-day sprint. Pick ONE skill. Go deep. Ship something that demonstrates it.

The job might not exist anymore. If you want a traditional IC role (make beautiful screens, hand to dev, repeat), reality check: adapt or exit the field.

Why level one feels like level two

There are two failure patterns that swallow most designers before they reach level two. They’re invisible until you’ve already driven into them.

The first: treating AI as a bypass rather than a foundation. If you don’t understand visual hierarchy, AI generates beautiful garbage and you don’t know it’s garbage. Juniors who learn design “through AI” bypass building intuition and end up producing mediocrity with great confidence. That’s visible in portfolios. It shows up in the first thirty seconds of a review. The fix isn’t slower AI use - it’s building the foundation that makes you able to judge what AI gives you.

The second is more subtle and hits experienced designers harder: accepting the first output. AI’s first proposal is always a starting point. If you treat it as a result, you’re not using AI as a tool - you’re letting it make design decisions for you. Making those decisions is the job. It’s what you’re paid for. And it’s exactly what distinguishes level one from level two: not which tools you use, but whether you’re the one deciding or the one accepting.